Quick select: By default, the database returns the number of rows requested as quickly as possible. This might be the first rows based on how the data is sorted, or the rows that the database had cached in memory from a previous query. While this is almost always faster than random sampling, it may return a biased sample (such as data for only one year rather than all years present in the data, if the records are sorted chronologically).

Random sample: The database looks at every row in the data set and randomly returns records until it reaches the number of rows requested, making the sample more representative. However, this will impact performance when the data is first retrieved because the entire data set must be scanned (rather than just the first N results, as with Quick select).
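The difference between the two sampling methods can be illustrated with a small Python sketch. This is not how Tableau Prep or any database implements sampling. It is a simplified model in which "quick select" takes the first N rows and "random sample" draws N rows uniformly from the whole data set. The data set, the `quick_select` and `random_sample` helpers, and all sizes are hypothetical and chosen only for illustration.

```python
import random

YEARS = range(2005, 2019)   # hypothetical data set covering 2005-2018
ROWS_PER_YEAR = 1_000       # hypothetical size, kept small for the demo
SAMPLE_SIZE = 500           # hypothetical sample limit

# Rows are stored in chronological order, as in a file sorted by date.
data = [{"year": y, "row": i} for y in YEARS for i in range(ROWS_PER_YEAR)]

def quick_select(rows, n):
    """Simplified model of Quick select: return the first n rows."""
    return rows[:n]

def random_sample(rows, n, seed=0):
    """Simplified model of Random sample: draw n rows from the full data set."""
    rng = random.Random(seed)  # fixed seed so the demo is repeatable
    return rng.sample(rows, n)

quick_years = sorted({r["year"] for r in quick_select(data, SAMPLE_SIZE)})
random_years = sorted({r["year"] for r in random_sample(data, SAMPLE_SIZE)})

print("Quick select years:", quick_years)
print("Random sample distinct years:", len(random_years))
```

In this model, quick select only ever sees the earliest year, while the random draw has to consider every row, which mirrors the trade-off between speed and representativeness described above.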

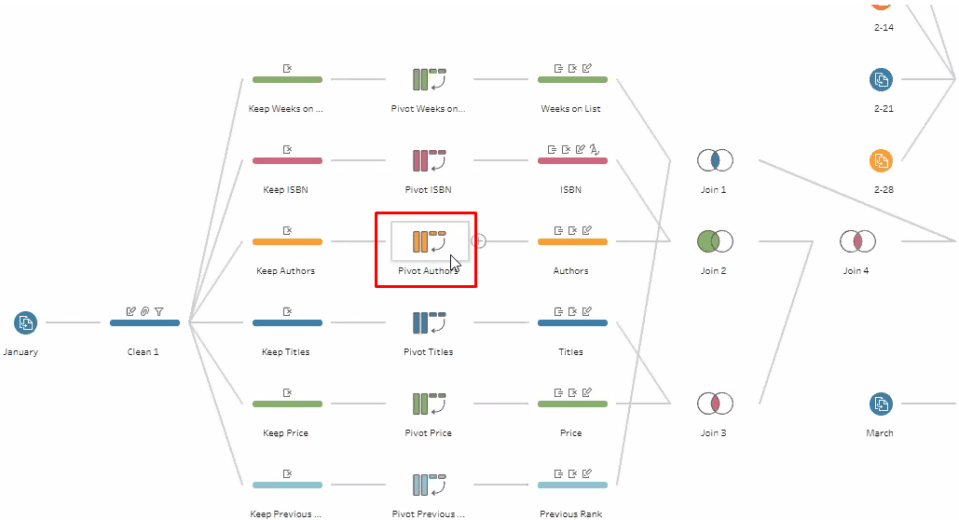

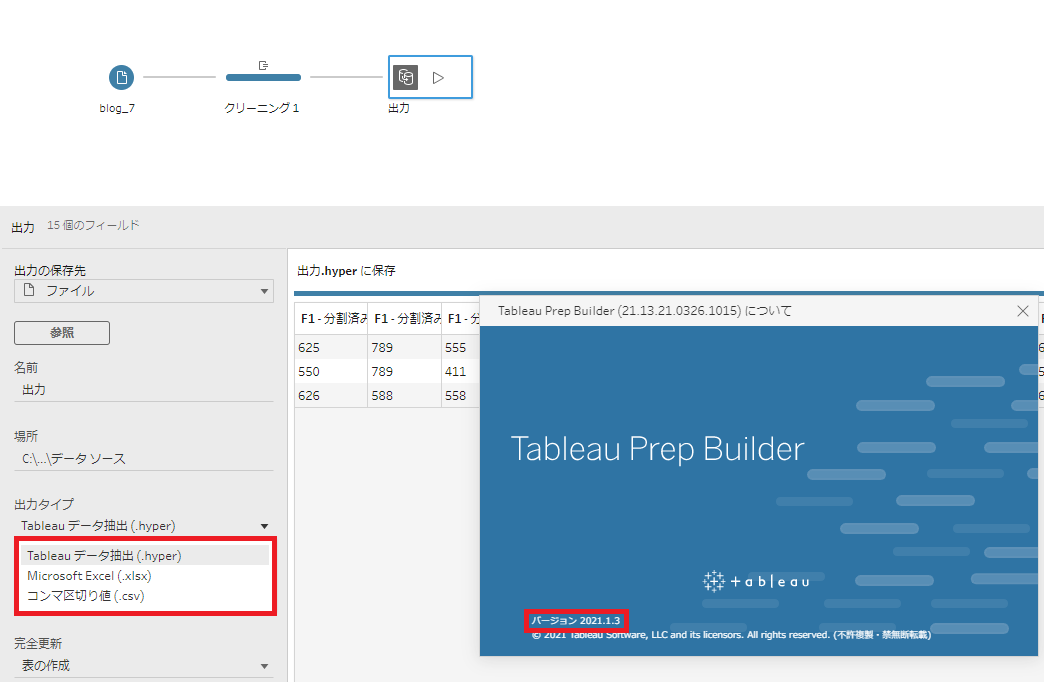

If I bring in flight data (used in the screenshots above), there are several fields that are mostly nulls, which I know I'm not going to use in my analysis. By de-selecting the fields in the Input step, the data is never loaded into Tableau Prep, which improves performance and allows for a larger sample size.

Tip: All changes made in the Input step will cause the data sample to be regenerated. If you have a large data set and want to use random sampling, you can reduce your wait time by making these changes together, or prior to changing the sampling method to random.

If you're not sure what you can filter or remove during the Input step, the profile pane is a great place to identify those changes. Let Tableau Prep generate a default sample, then use the profile pane to see which fields or values you could remove. Just make sure you go back to the Input step to make those adjustments. This will regenerate the sample, and the rest of your cleaning can be done on an optimized sample.

Once you've trimmed unnecessary fields and values from the data set, you may still want to change the amount of data in the sample or how the sample is generated. These settings are available on the Data Sample tab in the Input step:

Amount of Data: This option determines how much data is brought into the flow.

Default Sample Amount: The amount of data included in the default sample configuration. This isn't a fixed number of rows; how many records are returned depends on the characteristics of your data.

Fixed amount: Alternatively, you can specify the number of records to include in the sample, increasing or decreasing it from the default.

Use all data: If you don't want the data to be sampled, you can select this option to force Tableau Prep to retrieve all rows in your data.

Sampling method: This option determines how the records are chosen from the data source.
There are a few reasons you might adjust the default sample:

- You want to generate an even smaller sample (you know the data well and want to streamline the prep experience as much as possible).
- You want to generate a larger sample or use all of the data (there may be too many irregularities to clean the data effectively with a small sample).

If you are filtering data to limit the values in a certain field, applying the filter in the Input step will improve performance and help you get more out of your sample. In this example, I notice that my file has unwanted records from the year 2014. If I filter these records in the cleaning step, 100K rows will be removed after the data is sampled, leaving me with only 50K records from 2015. But if I filter the data at the Input step, the filter will be applied first, and I will get 150K records from 2015 in my sample.
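The arithmetic in the 2014 filtering example can be reproduced with a short Python sketch. It assumes a hypothetical file with 100K rows from 2014 followed by 200K rows from 2015, and a sample limit of 150K rows taken from the top of the file. Those sizes, and the helper names `sample_then_filter` and `filter_then_sample`, are assumptions picked to match the numbers quoted above, not values taken from Tableau Prep.

```python
from itertools import islice

SAMPLE_SIZE = 150_000  # hypothetical sample limit matching the example

def rows():
    """Yield a hypothetical data set: 100K rows from 2014, then 200K from 2015."""
    for _ in range(100_000):
        yield {"year": 2014}
    for _ in range(200_000):
        yield {"year": 2015}

def sample_then_filter(source, n):
    """Model of filtering in a cleaning step: sample first, then drop 2014."""
    sampled = list(islice(source, n))
    return [r for r in sampled if r["year"] != 2014]

def filter_then_sample(source, n):
    """Model of filtering in the Input step: drop 2014 first, then sample."""
    kept = (r for r in source if r["year"] != 2014)
    return list(islice(kept, n))

cleaning_step_rows = sample_then_filter(rows(), SAMPLE_SIZE)
input_step_rows = filter_then_sample(rows(), SAMPLE_SIZE)

print(len(cleaning_step_rows))  # 50000 rows from 2015 survive
print(len(input_step_rows))     # 150000 rows from 2015 fill the sample
```

The order of operations is the only difference between the two functions, yet it triples the number of usable rows in the sample.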

The default sample may not be representative of the data (for example, the default settings only pulled data from 2005 when the data set covers 2005-2018). This is common when you have data that is ordered by date, or if you are using a wildcard union.
